Joshua Jaekel

I am currently a graduate student at the Robotics Institute at Carnegie Mellon University. I work in the Robot Perception Lab under the supervision of Dr. Michael Kaess. My primary research interests involve robot perception and autonomy, with a focus on creating systems robust enough to be deployed in the wild. I am currently competing in the DARPA Subterranean Challenge as a member of Team Explorer. I hope you enjoy taking a glimpse at some of the projects I've been proud to work on!

Education

Carnegie Mellon University

University of Windsor

Highlighted Projects

Robust Visual Inertial Odometry

This project has been the focus of my research in the Robot Perception Lab at CMU. The goal is to use information from multiple cameras with non-overlapping fields of view and an inertial measurement unit to create a VIO algorithm capable of maintaining a state estimate where current state-of-the-art algorithms fail. I developed a feature-based VIO algorithm with a novel outlier rejection scheme that jointly selects features across multiple cameras for use in a backend optimization.

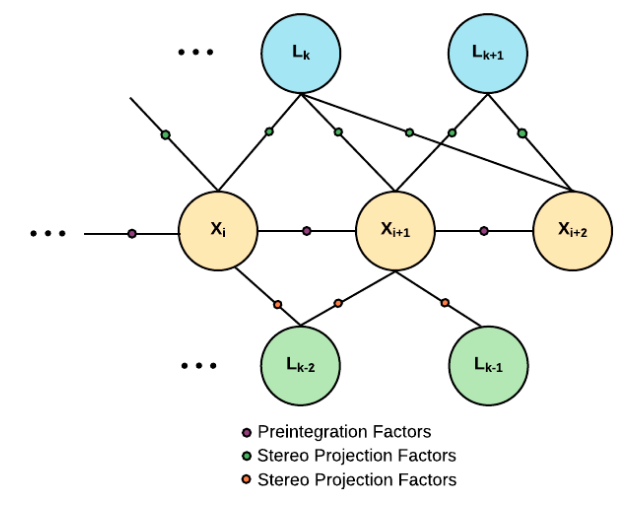

The backend of the state estimator is a factor graph which includes projection factors from features tracked in each stereo pair as well as IMU preintegration factors. The pipeline accounts for uncertainty in the extrinsic calibration between cameras both in the feature selection step and in the backend optimization. Results for this project are currently under peer review. I look forward to sharing more details at the time of publication!

Multi-Stereo Direct Visual Inertial Odometry

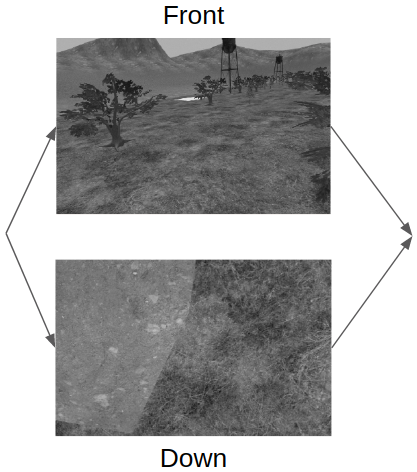

This work was completed as part of a group project for the Robot Localization and Mapping course at CMU. The project extended my main research, which focussed on indirect methods for multi-camera VIO.

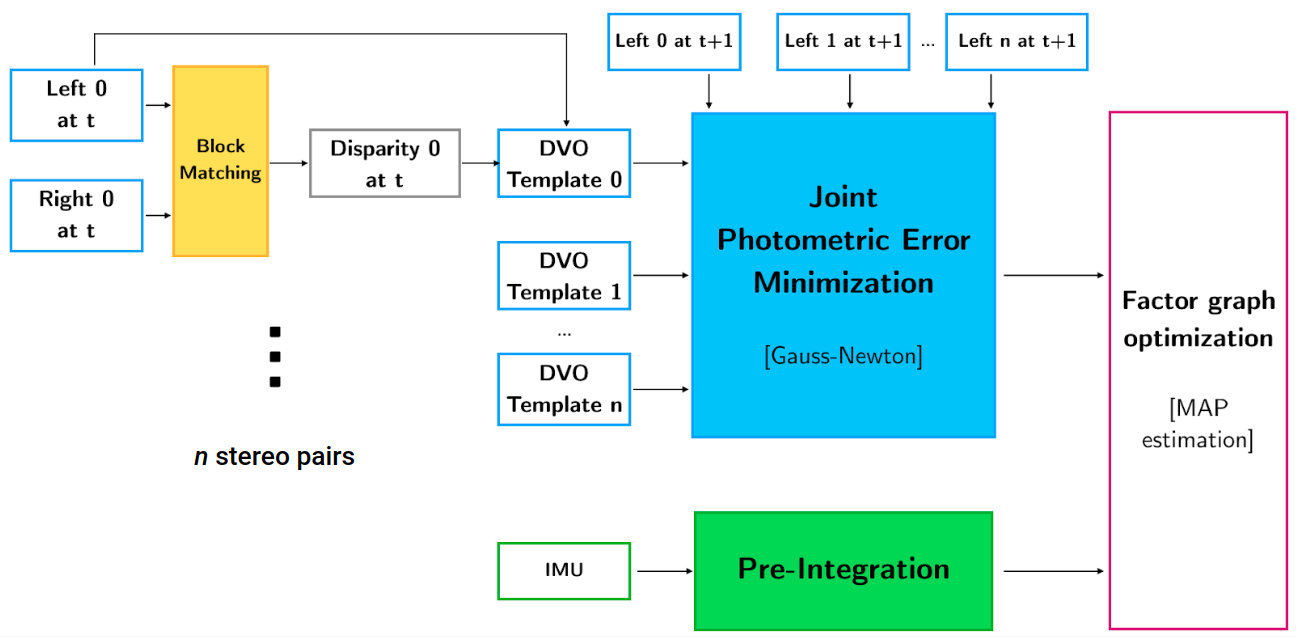

The inputs to our pipeline included data from an IMU and images from an arbitrary number of stereo cameras with non-overlapping fields of view. The state estimate was calculated using a factor graph based optimization which used IMU preintegration factors and joint direct visual odometry factors. The joint DVO factors were pose-to-pose constraints which jointly minimized the photometric error between images in all the stereo pairs.
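As a rough illustration of what a joint photometric cost of this kind can look like, here is a sketch under stated assumptions rather than the exact formulation we used. The symbols are introduced only for this example: $T$ is the relative body pose between two keyframes, $X_c$ is the extrinsic transform taking points from camera $c$ to the body frame, $\pi$ is the camera projection, $d_{\mathbf{u}}$ is the stereo depth at pixel $\mathbf{u}$, and $I_c$, $I'_c$ are the reference and current images of camera $c$.

$$
E(T) = \sum_{c=1}^{N} \sum_{\mathbf{u} \in \Omega_c} \Big( I'_c\big(\pi\big(X_c^{-1}\, T\, X_c\, \pi^{-1}(\mathbf{u}, d_{\mathbf{u}})\big)\big) - I_c(\mathbf{u}) \Big)^2
$$

Because every camera's residuals depend on the same body pose $T$, minimizing $E(T)$ yields a single pose-to-pose constraint informed by all stereo pairs at once. A practical version would typically add a robust weighting on each residual.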

Planning for Coverage Under Uncertainty

This work was completed as part of a group project for the Planning for Robotics course at CMU. The goal of the project was to generate plans for a robot given noisy odometry and a known localizability map. The localizability map consisted of range beacons placed throughout the environment. The goal of the planner was to explore the environment while attempting to minimize both its distance travelled and state uncertainty.

The robot generated plans that deviated from the shortest path in order to reduce its state uncertainty by travelling near range beacons. It also performed collision checking within 3-sigma of its estimated state to maintain safety even under state uncertainty.
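To make the 3-sigma check concrete, here is a minimal sketch and not the project's actual code. It assumes a 2D position estimate with a 2x2 covariance and circular obstacles given as `(x, y, radius)`. The function names and the `robot_radius` parameter are hypothetical. For simplicity it bounds the 3-sigma uncertainty ellipse by a circle whose radius is three times the largest standard deviation, which is conservative.

```python
import math


def max_std_dev(cov):
    """Largest standard deviation of a symmetric 2x2 covariance [[a, b], [b, c]]."""
    (a, b), (_, c) = cov
    mean = 0.5 * (a + c)
    spread = math.sqrt((0.5 * (a - c)) ** 2 + b * b)
    # mean + spread is the largest eigenvalue of the covariance matrix.
    return math.sqrt(mean + spread)


def collides_within_3_sigma(mean_xy, cov, obstacles, robot_radius=0.0):
    """Return True if any obstacle lies within the 3-sigma bounding circle.

    The uncertainty ellipse is over-approximated by a circle, so this check
    can report a collision that the exact ellipse would not, but never the
    other way around.
    """
    margin = 3.0 * max_std_dev(cov) + robot_radius
    for ox, oy, radius in obstacles:
        distance = math.hypot(mean_xy[0] - ox, mean_xy[1] - oy)
        if distance <= radius + margin:
            return True
    return False


if __name__ == "__main__":
    obstacles = [(1.0, 0.0, 0.3)]
    confident = [[0.04, 0.0], [0.0, 0.01]]   # e.g. shortly after passing a beacon
    uncertain = [[0.09, 0.0], [0.0, 0.01]]   # e.g. after drifting on odometry alone
    print(collides_within_3_sigma((0.0, 0.0), confident, obstacles))
    print(collides_within_3_sigma((0.0, 0.0), uncertain, obstacles))
```

The same state can be safe when the estimate is tight and unsafe once the covariance grows, which is one reason a planner might prefer a longer route that passes near range beacons.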

Publications and Workshops

Jaekel, J., Kaess, M. (2019, November). Robust Multi-Stereo Visual-Inertial Odometry. Workshop on Visual-Inertial Navigation: Challenges and Applications at IROS 2019.

Jaekel, J., Ahamed, M. J. (2017, March). An inertial navigation system with acoustic obstacle detection for pedestrian applications. In 2017 IEEE International Symposium on Inertial Sensors and Systems (INERTIAL).

Liu, J., Jaekel, J., Ramdani, D., Khan, N., Ting, D. S. K., & Ahamed, M. J. (2016, November). Effect of geometric and material properties on thermoelastic damping (TED) of 3D hemispherical inertial resonator. In ASME 2016 International Mechanical Engineering Congress and Exposition.

Interests

While I've been lucky enough to turn several of my interests into full-time research, I still have some interests which keep me busy outside the lab. I'm an avid sports fan, and although it may be difficult at times, I'm a strong supporter of all the Detroit area sports teams. I've also been a volunteer with the Hugh O'Brian Youth (HOBY) Leadership Organization since 2012.